Introducing Social Engineering Identity Defense (SEID): A New Category for Securing Identity Workflows Beyond Authentication

A New Workforce Identity Security Category for Protecting the Workflows Attackers Exploit As Authentication Gets Stronger

In this report, we are introducing a new category we call Social Engineering Identity Defense (SEID). The premise is simple: as enterprises harden authentication with MFA, passkeys, and stronger identity controls, attackers are shifting toward the workflows that sit around identity: help desk resets, account recovery, onboarding, internal approvals, and collaboration channels.

SEID is our framework for understanding and securing this emerging attack surface, where trust is not broken at the login screen but manipulated through the business processes that grant, restore, or change access. We hope you find the detail in this research note useful.

Author

Sean Sosnowski serves as the Research Director for Security Operations and Cloud Security at SACR, where he leads research on SOC strategy and operations, detection engineering, and the evolving role of automation and agentic AI in security workflows. Drawing on a decade of intelligence experience in the U.S. Marine Corps, he has authored several analytic reports on emerging technologies and threats regarding sensitive national security and military operations.

Executive Summary

The next major identity breach may not start with a stolen password. It is more likely to start with one of the following:

A phone call to the help desk.

A fake candidate in the hiring process.

A Slack message that looks like it came from an executive.

A request to reset MFA.

A support ticket that feels urgent enough to bypass normal procedure.

That is the uncomfortable reality facing enterprise security teams. The identity stack has become stronger at the login layer, but attackers have found a softer target: the workflows that can change identity without breaking authentication directly.

The center of gravity in identity security has shifted from the login prompt to the workflows around it. As enterprises deploy stronger, phishing-resistant authentication, attackers are moving toward the human systems they can still exploit. Account recovery, help desk operations, hiring and onboarding, and internal communications are increasingly targeted. These workflows were built for speed, continuity, and trust. They were not built to function as high-assurance security controls under adversarial pressure. That gap is now one of the most important blind spots in workforce identity security.

Generative AI makes this problem more dangerous, but it does not create it. The underlying weakness is structural. Deepfake voice, synthetic video, AI-assisted scripting, and contextual impersonation give attackers better tools to exploit the same issue, where many sensitive identity actions are still treated as routine support tasks rather than security-critical transactions. In practice, attackers do not need to beat passkeys or MFA head-on. They need to persuade a help desk agent to reset an account, convince a recruiter to advance a synthetic candidate, manipulate an employee through a trusted collaboration channel, or exploit a recovery path that lacks the rigor of primary authentication.

This report argues that workforce identity security must be reframed accordingly. The right model is not simply stronger authentication or more awareness training. It is a workflow-centric approach that extends identity assurance into the moments where access is granted, changed, delegated, or restored. We describe this model as Social Engineering Identity Defense (SEID), a control framework built around verification in workflow, governed human touchpoints, cross-channel identity trust, and auditable decisioning. The goal is to reduce attacker leverage where human judgment and business processes still determine access outcomes.

The implication for security leaders is straightforward. Identity programs centered only on sign-in protection will increasingly miss the transactions that matter most. The next phase of identity defense will be won by organizations that treat recovery, support, onboarding, and internal approvals as part of the identity plane itself; reduce discretionary decisions in high-risk flows; and build systems that can verify, govern, and explain sensitive identity actions in real time. This is not a marginal extension of IAM. It is the beginning of a broader workforce identity security market built for the era of social engineering, deepfakes, and human-layer compromise.

Problem

This emerging market exists because the enterprise identity stack is still securing the wrong boundary. Most identity programs focus on sign-in, yet many of the highest-consequence identity decisions happen after the user leaves the login screen. The shift is not simply that social engineering has improved. Stronger authentication is pushing attackers into the workflow layer, where human judgment, fragmented context, and business urgency still determine outcomes. Passkeys and phishing-resistant MFA raise the cost of direct credential theft, but they do not eliminate recovery bypass, help desk manipulation, session abuse, or trusted-channel impersonation. In practice, attackers do not need to break the front door if they can persuade an operator to issue a reset, approve a device, advance a synthetic hire, or trust an internal message that triggers a legitimate-looking exception.

AI-assisted impersonation increases pressure on the people and systems already responsible for resolving edge cases quickly. The result is a workforce identity blind spot, where enterprises have hardened authentication, but the workflows that change identity remain easier to manipulate. The core problem is not a lack of awareness training or the arrival of one more phishing variant. It is that workforce identity security has not yet extended beyond authentication into the human workflows and applications further up the stack, where additional trust is granted, exceptions are made, and access is ultimately decided.

Social Engineering as Workflow Abuse

For this report, social engineering in cybersecurity means the use of deception to cause a legitimate person to perform a security-relevant action on an attacker’s behalf. In enterprise identity environments, its most important form is workflow manipulation, in which the attacker targets the decision points where access is reset, restored, approved, delegated, or trusted across channels.

This framing shifts the analysis from user awareness to operating control. The central question is how deceptive requests move through a business process, what evidence is evaluated, who has authority to approve the action, and whether the resulting decision can be explained after the fact.

What changed is not that attackers suddenly discovered a new human weakness. It is that stronger authentication methods such as passkeys and phishing-resistant MFA have made direct compromise harder, pushing adversaries up the stack toward the workflows that sit beyond authentication rather than through authentication itself. Account recovery, help desk operations, hiring and onboarding, and internal communications have become prime attack surfaces because they can still authorize high-impact identity changes under conditions of urgency, ambiguity, and incomplete context.

The threat is compounded by Generative AI, which provides attackers with better tools such as deepfake voice, synthetic video, AI-assisted scripting, and contextual impersonation. Attackers no longer need to beat MFA head-on. Instead, they seek to persuade a help desk agent to reset an account, convince a recruiter to advance a synthetic candidate, or manipulate an employee through a trusted channel.

Social engineering should therefore be treated as an operational security problem, not merely a training problem. Its enterprise form is now increasingly workflow-centric and identity-adjacent, focused on the abuse of authorized processes through impersonation, contextual pressure, and cross-channel coordination. The attacker’s goal is to convert trust into a legitimate action that grants access, changes identity state, or moves money and data.

Real-Life Examples of Social Engineering

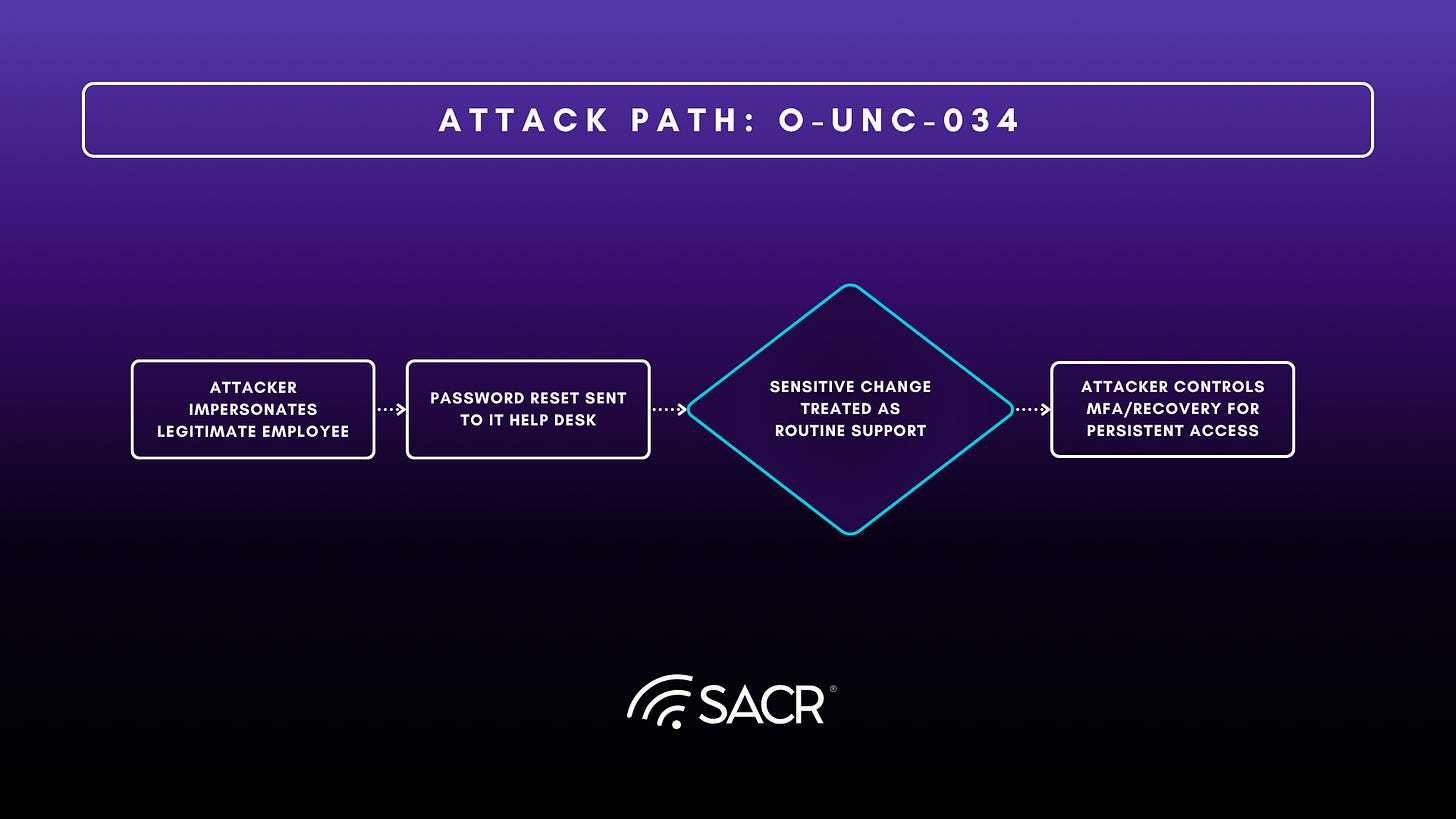

A strong real-world example of the SEID problem is Okta Threat Intelligence’s reporting on O-UNC-034. According to Okta, the group used social engineering of help desk staff to take over employee accounts and then manipulate data in payroll systems. The sequence is important because it shows that the attacker did not need to defeat phishing-resistant authentication directly. Instead, the attacker targeted the workflow that could reset access on the user’s behalf.

Okta reported that the actor impersonated legitimate employees and contacted the target company’s IT help desk to request password resets. After a successful reset, the actor established persistence by enrolling an attacker-controlled MFA method or manipulating recovery factors, including Okta Verify, voice call authentication, SMS, or security questions. From there, the actor pivoted into internal applications, including payroll platforms such as Workday, Dayforce HCM, and ADP, as well as CRM and IT service management systems such as Salesforce and ServiceNow. Okta also noted access to collaboration environments such as Office 365 and Google Workspace, which could support additional internal reconnaissance and follow-on abuse.

This is exactly the kind of identity attack path SEID is designed to address. The decisive failure did not occur at the login prompt. It occurred when a help desk workflow treated a sensitive identity change as a routine support action rather than a high-risk identity transaction. In SEID terms, O-UNC-034 illustrates a breakdown in Verification-in-Workflow, because a password reset and MFA change were approved without sufficiently strong identity proofing at the moment of change. It also illustrates the importance of Governed Human Interaction, because a front-line operator was placed in a position to make a high-impact identity decision under uncertainty and urgency.

The case also supports the need for Cross-Channel Identity Trust. Although the immediate action took place through the help desk, the actor’s objective extended beyond account recovery into payroll manipulation and broader access to enterprise systems. That is the core SEID insight: attackers increasingly exploit the workflows around identity rather than attacking authentication head-on. Once a support process can be manipulated into issuing a reset or accepting a new authenticator, the attacker can obtain legitimate-looking access through an apparently legitimate business process.

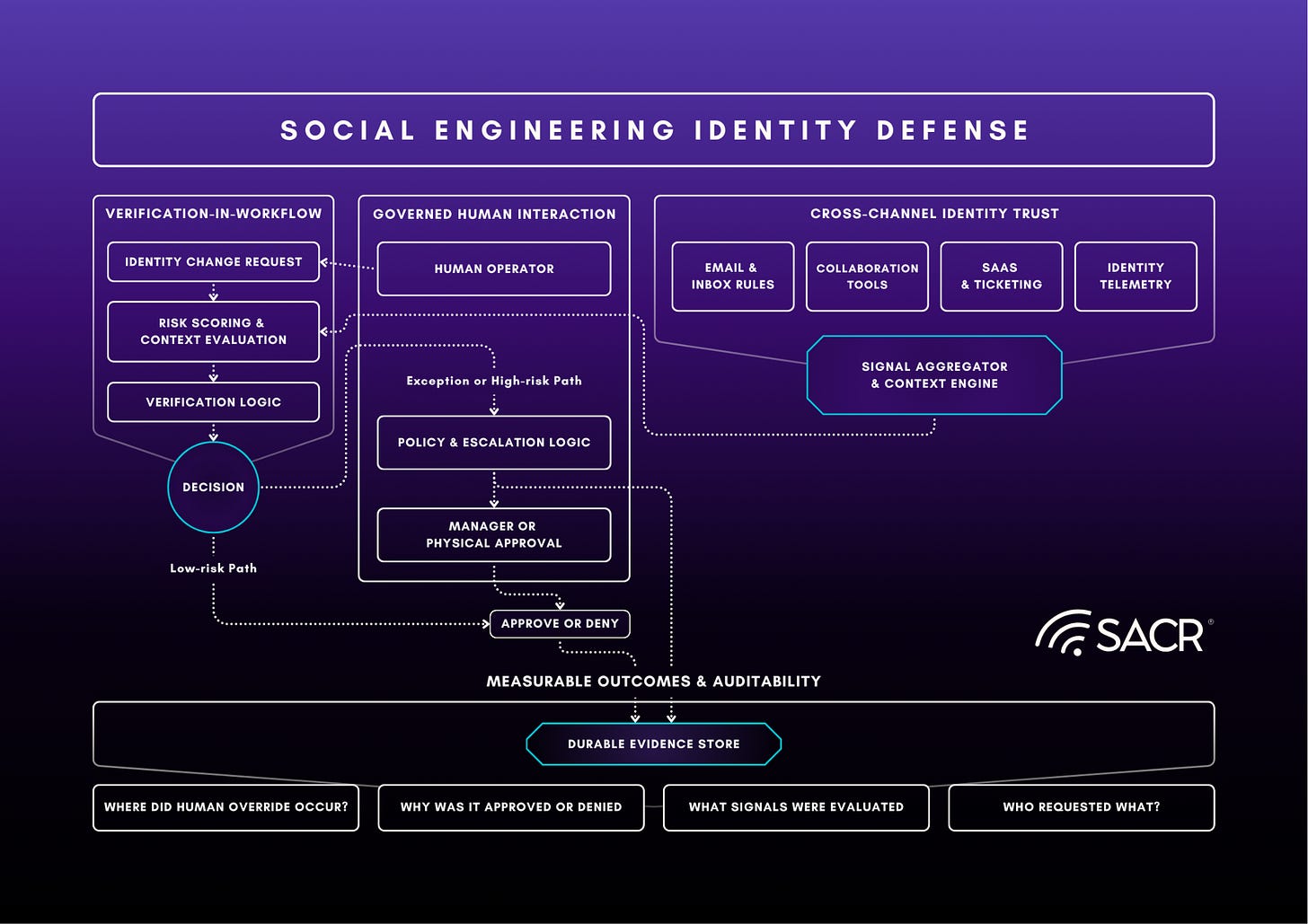

Social Engineering Identity Defense

SEID organizes this problem around the workflows where identity trust is created or changed. The framework has four linked requirements: verify the requester inside the workflow; govern and inform the participants at the human touchpoints that can approve sensitive actions (through visible human indicators and scoring); carry trust signals across communication and identity channels; and preserve enough evidence to explain each decision after the fact. Together, these controls make identity-changing workflows more resistant to manipulation because they inform humans (or autonomous agents) of increased risk.

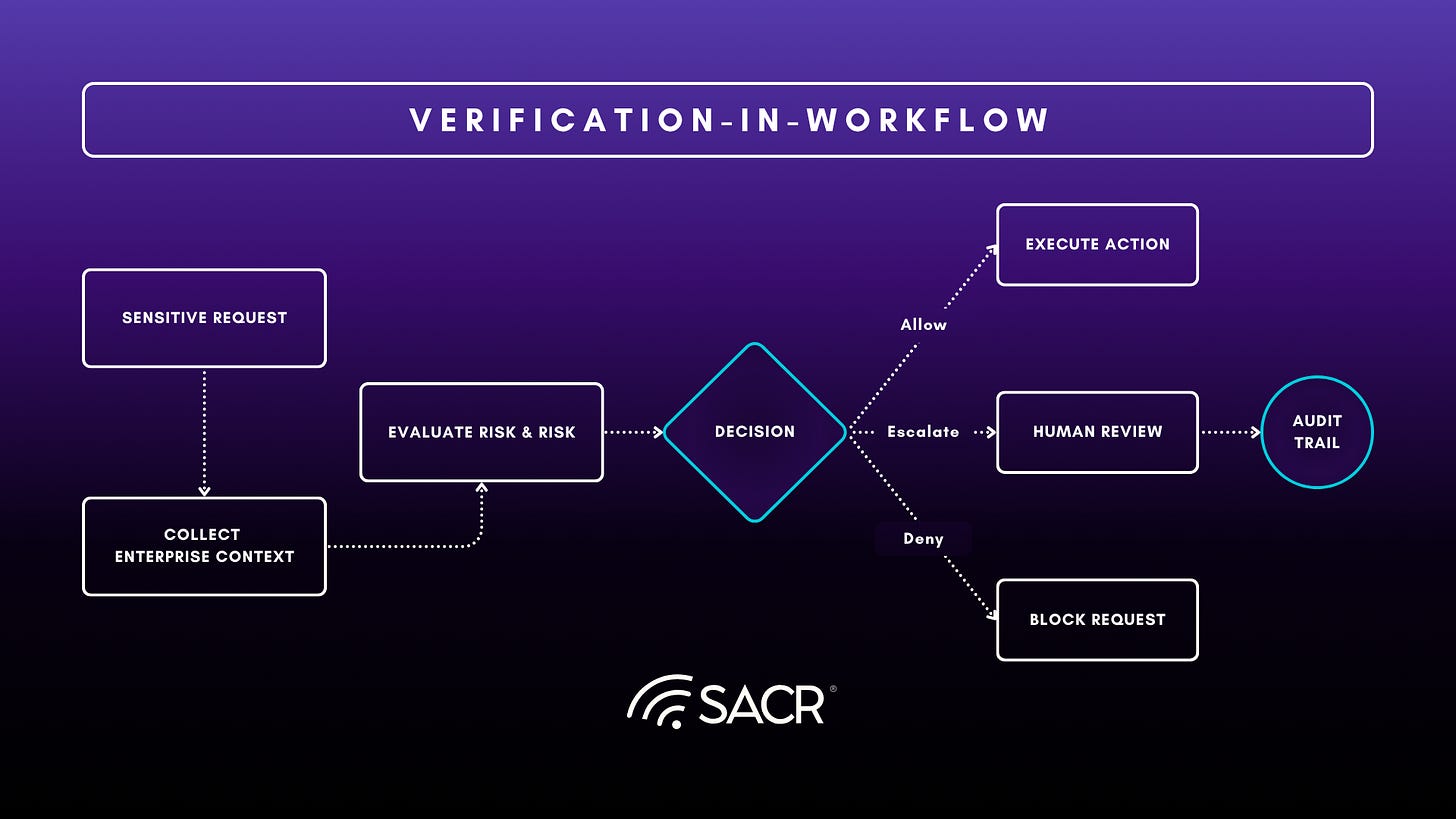

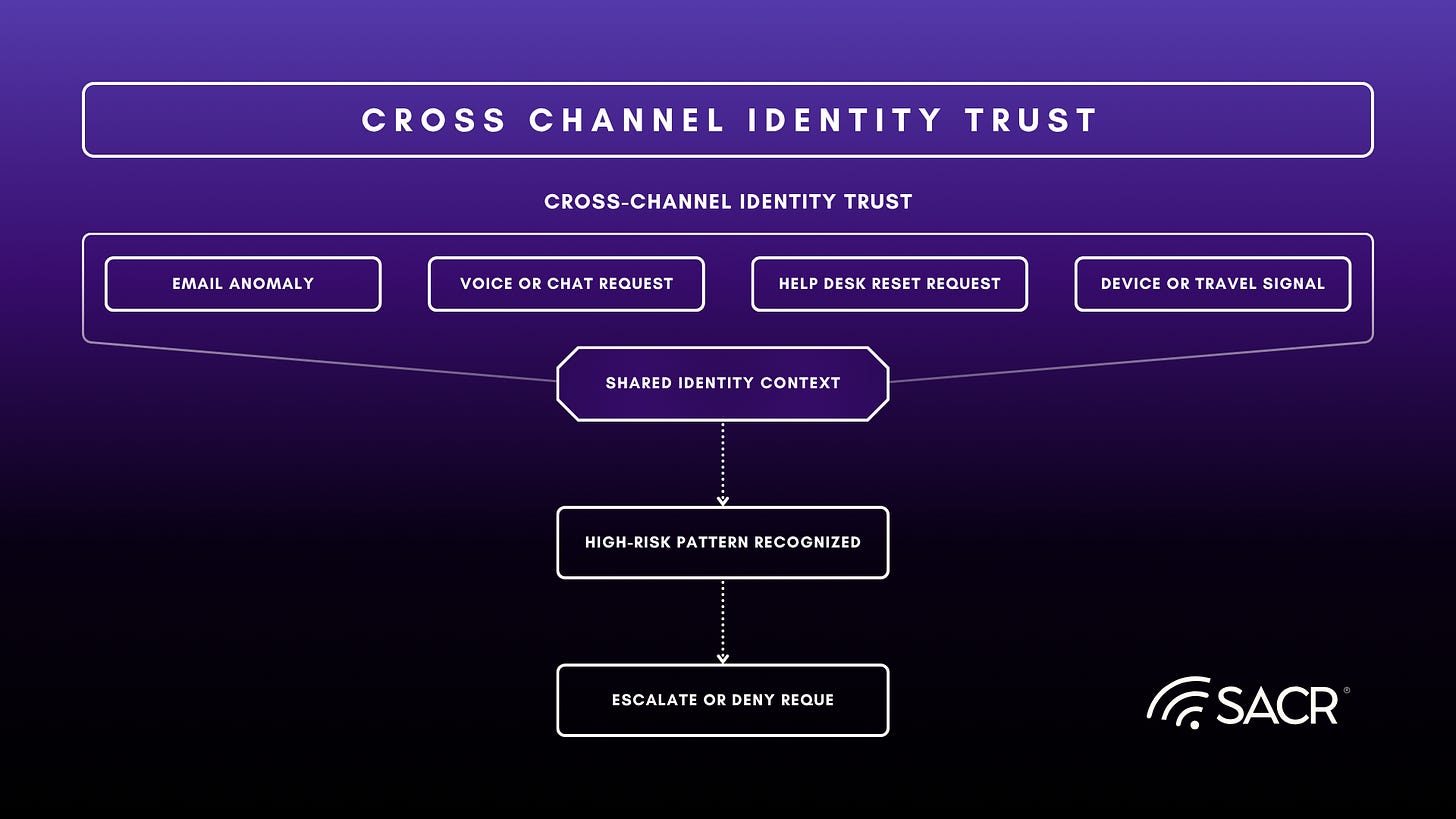

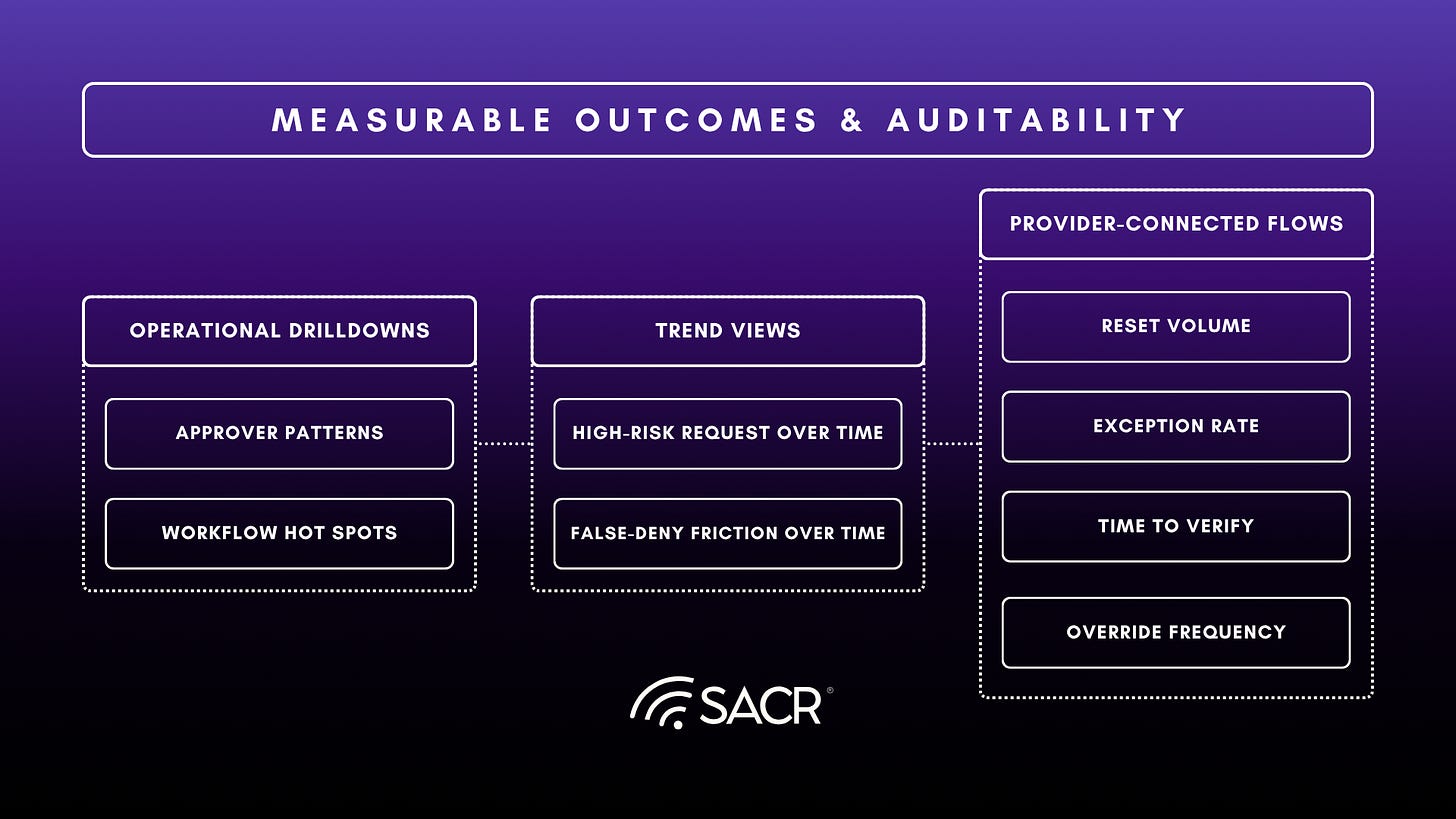

Each pillar owns a distinct decision problem. Verification-in-Workflow establishes whether the requester should be trusted at the moment of change. Governed Human Interaction defines how operators act when the request is sensitive or potentially ambiguous. Cross-Channel Identity Trust ensures that risk signals from one channel can inform decisions in another. Measurable Outcomes and Auditability preserve the evidence needed to reconstruct and improve the process.

Together, these pillars shift identity defense from authentication at the front door to trust enforcement across the workflows that actually govern workforce access.

SEID’s core scope is identity-sensitive workflow control. It applies to account recovery, MFA reset, device enrollment, onboarding credential issuance, privileged support actions, and high-risk internal approvals. Adjacent areas such as email security, ITDR, identity governance, and security awareness matter when they feed evidence into these workflows or help govern the decision itself. This boundary keeps SEID focused on the moments where trust changes state.

Verification-in-Workflow

Verification-in-Workflow brings identity assurance to the point where a sensitive action is requested. Password resets, account unlocks, MFA changes, and onboarding credential issuance should be treated as identity transactions because they can alter trust as materially as a successful login. The control objective is to ensure that the requester is verified before the workflow executes the change.

Architecturally, the request should pass through a policy-controlled verification step before execution. That step can evaluate device, location, ticket, employment, and recent activity context, then apply adaptive proofing and inform workflow participants based on risk. Low-risk requests can move quickly, while higher-risk requests can require stronger evidence, additional approval, or in-person validation. The value of the model comes from placing verification directly inside the workflow, where the sensitive identity decision is made.
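The policy-controlled verification step described above can be illustrated with a small sketch. This is a minimal example under stated assumptions, not a reference implementation: the `RequestContext` fields, the weights in `risk_score`, and the tier thresholds in `required_proofing` are all hypothetical and untuned. The point is only that the proofing requirement is derived from context before the change executes.

```python
from dataclasses import dataclass
from enum import Enum


class Requirement(Enum):
    """Proofing tiers named after the options in the text above."""
    STANDARD_VERIFICATION = "standard_verification"
    STRONGER_EVIDENCE = "stronger_evidence"
    ADDITIONAL_APPROVAL = "additional_approval"
    IN_PERSON_VALIDATION = "in_person_validation"


@dataclass(frozen=True)
class RequestContext:
    """Hypothetical context gathered before a sensitive identity change."""
    action: str              # e.g. "password_reset" or "mfa_change"
    known_device: bool       # request comes from a previously seen device
    usual_location: bool     # location matches the user's normal pattern
    has_open_ticket: bool    # a matching support ticket exists
    active_employee: bool    # employment system shows the user as active
    recent_anomalies: int    # recent suspicious events for this identity


def risk_score(ctx: RequestContext) -> int:
    """Add illustrative (assumed, untuned) weights for each missing signal."""
    score = 0
    if not ctx.known_device:
        score += 2
    if not ctx.usual_location:
        score += 2
    if not ctx.has_open_ticket:
        score += 1
    if not ctx.active_employee:
        score += 4
    score += min(ctx.recent_anomalies, 3)  # cap the anomaly contribution
    return score


def required_proofing(ctx: RequestContext) -> Requirement:
    """Map the score to the proofing the workflow must complete first."""
    score = risk_score(ctx)
    if score <= 1:
        return Requirement.STANDARD_VERIFICATION
    if score <= 3:
        return Requirement.STRONGER_EVIDENCE
    if score <= 5:
        return Requirement.ADDITIONAL_APPROVAL
    return Requirement.IN_PERSON_VALIDATION
```

In this sketch, a reset from a known device in a usual location with an open ticket stays on the fast path, while the same request from an unknown device and location with recent anomalies is routed to in-person validation.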

Governed Human Interaction

Many of the most exploitable identity decisions are made by human operators. Help desk agents, HR, and other user-facing staff are frequently required to resolve issues that are deemed urgent, making them high-value targets for social engineering attacks. The core of this pillar is reducing the number of critical decisions that a human operator has to improvise. Today, a single operator can often reset a password based on information that is easily gathered through basic open-source intelligence collection. In SEID, operators instead initiate the Verification-in-Workflow process to validate the identity of the user without relying on easily collectable information. The process either automatically approves the request or moves it into a tightly controlled exception workflow that requires manager approval or the physical presence of the user to reset credentials.
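The approve-or-escalate flow described above can be sketched as a small decision function. All names here (`ResetRequest`, `decide`, the field names) are hypothetical. The rule that the approving manager must differ from both the operator and the requesting user is an added assumption about separation of duties, not something the framework text specifies.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ResetRequest:
    """Hypothetical credential-reset request handled by a support operator."""
    user_id: str
    operator_id: str
    verification_passed: bool              # outcome of in-workflow verification
    manager_approver_id: Optional[str] = None
    verified_in_person: bool = False
    log: List[str] = field(default_factory=list)


def decide(request: ResetRequest) -> str:
    """Return "approved" or "blocked"; operator discretion is not an input."""
    if request.verification_passed:
        request.log.append("auto-approved: verification passed")
        return "approved"

    # Exception path: manager approval or physical presence is required.
    approver = request.manager_approver_id
    # Assumption: the operator and the requester cannot approve the exception.
    if approver is not None and approver not in (request.operator_id, request.user_id):
        request.log.append(f"approved via exception: manager {approver}")
        return "approved"
    if request.verified_in_person:
        request.log.append("approved via exception: in-person check")
        return "approved"

    request.log.append("blocked: exception requirements not met")
    return "blocked"
```

The design choice worth noting is that the operator's own judgment never appears as a condition. The operator starts the process, but the outcome depends only on verification results and the governed exception path.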

Governed Human Interaction also faces cultural obstacles. Legitimate users and support teams alike expect easy and consistent workflows that deliver results. Controls that increase friction between a legitimate user and the help desk will often be disregarded or not used to their full potential. Governed Human Interaction therefore has to be structured as a model that improves the capabilities of help desk operations, not as a restriction. Properly implemented governance should reduce ambiguity for operators and lower the likelihood of them becoming a weak link in the identity verification process. The target outcome is a support system that resolves ordinary issues efficiently while hardening the process against exploitation.

Cross-Channel Identity Trust

Cross-Channel Identity Trust exists because effective social engineers don’t stay inside one workflow or tool. Attacks may start in email, then move to voice and other platforms as the attacker gains trust within an organization. The attacker can then pursue their goals by taking advantage of the trust established across those platforms. A security model that evaluates email, collaboration tools, and identity systems in isolation will miss the contextual indicators of effective social engineering.

Through Cross-Channel Identity Trust, SEID extends from transactional security to continuous protection across an organization. Architecturally, Cross-Channel Identity Trust is a shared layer across email, collaboration tools, and identity infrastructure. The communication stack shares signals that help identify anomalous activity across an organization, such as thread hijacking, excessive authority or urgency language, and abnormal requests that do not fit prior norms of interaction. Adding identity telemetry contributes signals about impossible travel, new devices, abnormal inbox rules, session anomalies, token misuse, or suspicious post-authentication behavior.

The goal is to preserve a sequence of contextual information that can inform an identity-centric decision. A password reset request that arrives at a help desk after anomalous activity from the same identity can then be treated differently from the same request from a trusted user. Cross-Channel Identity Trust builds temporal and behavioral context on identities within an organization to inform the Verification-in-Workflow process.
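One way to picture this temporal context is a shared timeline that any channel can write to and any workflow can query. The sketch below is illustrative only: `Signal`, `SignalTimeline`, `reset_needs_step_up`, and the 72-hour lookback window are assumptions introduced for the example, not part of the framework.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class Signal:
    """Hypothetical risk signal observed in one channel for one identity."""
    identity: str
    channel: str      # e.g. "email", "collaboration", "identity"
    kind: str         # e.g. "thread_hijack", "impossible_travel"
    seen_at: datetime


class SignalTimeline:
    """Keeps signals from all channels so any workflow can query them."""

    def __init__(self) -> None:
        self._signals: List[Signal] = []

    def record(self, signal: Signal) -> None:
        self._signals.append(signal)

    def recent(self, identity: str, now: datetime, window: timedelta) -> List[Signal]:
        """Return this identity's signals inside the lookback window, oldest first."""
        cutoff = now - window
        matches = [
            s for s in self._signals
            if s.identity == identity and cutoff <= s.seen_at <= now
        ]
        return sorted(matches, key=lambda s: s.seen_at)


def reset_needs_step_up(
    timeline: SignalTimeline,
    identity: str,
    now: datetime,
    window: timedelta = timedelta(hours=72),  # assumed lookback, not a standard
) -> bool:
    """Escalate a reset if any channel flagged this identity recently."""
    return len(timeline.recent(identity, now, window)) > 0
```

A help desk workflow could call `reset_needs_step_up` before executing a reset, so that a thread-hijacking signal from email and an impossible-travel signal from the identity provider both influence the same decision.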

Measurable Outcomes & Auditability

The final pillar, Measurable Outcomes & Auditability, turns sensitive identity actions into security events rather than vague support transactions. In many identity incidents, failure lies in post-incident ambiguity: there is no clear record of who initiated the request, what evidence was collected and considered, which policy was followed, and where human operators overrode system rules. SEID treats auditable metrics as a control surface. Every action taken by human operators or autonomous systems is cataloged along with the contextual evidence used to justify it.

Security teams should be able to reconstruct a complete workflow from request to decision, with evidence showing why the decision was made. In practice, this means capturing data such as the requester, associated identities, communication method, risk score, verification methods, approvers involved, and final decision. Integrating auditability into the workflow itself builds transparency and trust across an organization, enabling process improvement and increasing defensive capabilities against an attack.
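The fields listed above translate naturally into a structured decision record. The following is a minimal sketch: `DecisionRecord` and `to_audit_line` are hypothetical names, the `decided_at` timestamp is an added assumption, and a real deployment would also need tamper-evident storage, which is out of scope here.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class DecisionRecord:
    """One identity-sensitive decision, using the fields named in the text."""
    requester: str
    associated_identities: List[str]
    communication_method: str
    risk_score: float
    verification_methods: List[str]
    approvers: List[str]
    final_decision: str
    decided_at: str  # assumed extra field: ISO 8601 UTC timestamp


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize one decision as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


# Example values are invented for illustration only.
example = DecisionRecord(
    requester="caller claiming to be jdoe",
    associated_identities=["jdoe"],
    communication_method="phone",
    risk_score=0.82,
    verification_methods=["contextual_questions"],
    approvers=[],
    final_decision="denied",
    decided_at=datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc).isoformat(),
)
audit_line = to_audit_line(example)
```

Writing one self-describing line per decision keeps the record easy to ship to a log pipeline and easy to replay when reconstructing a workflow from request to decision.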

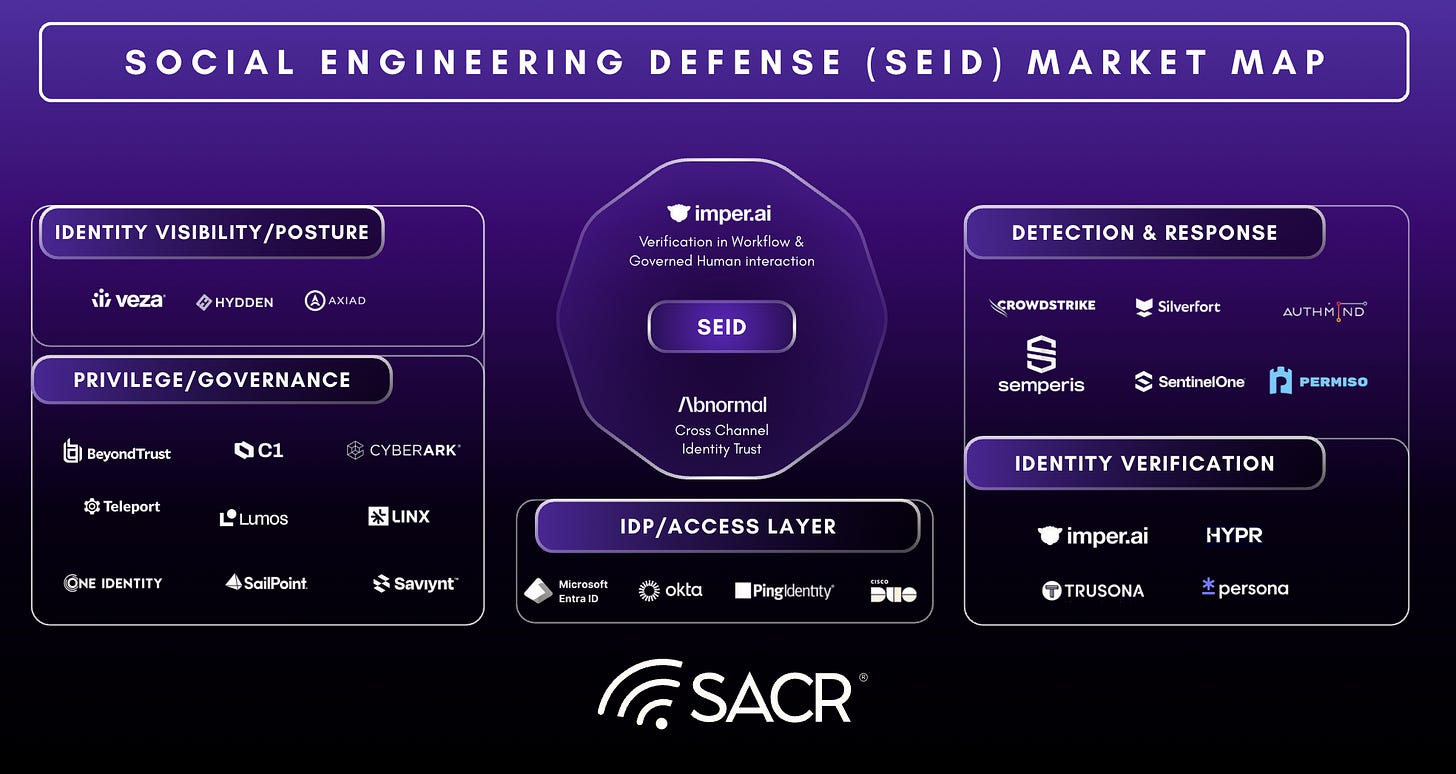

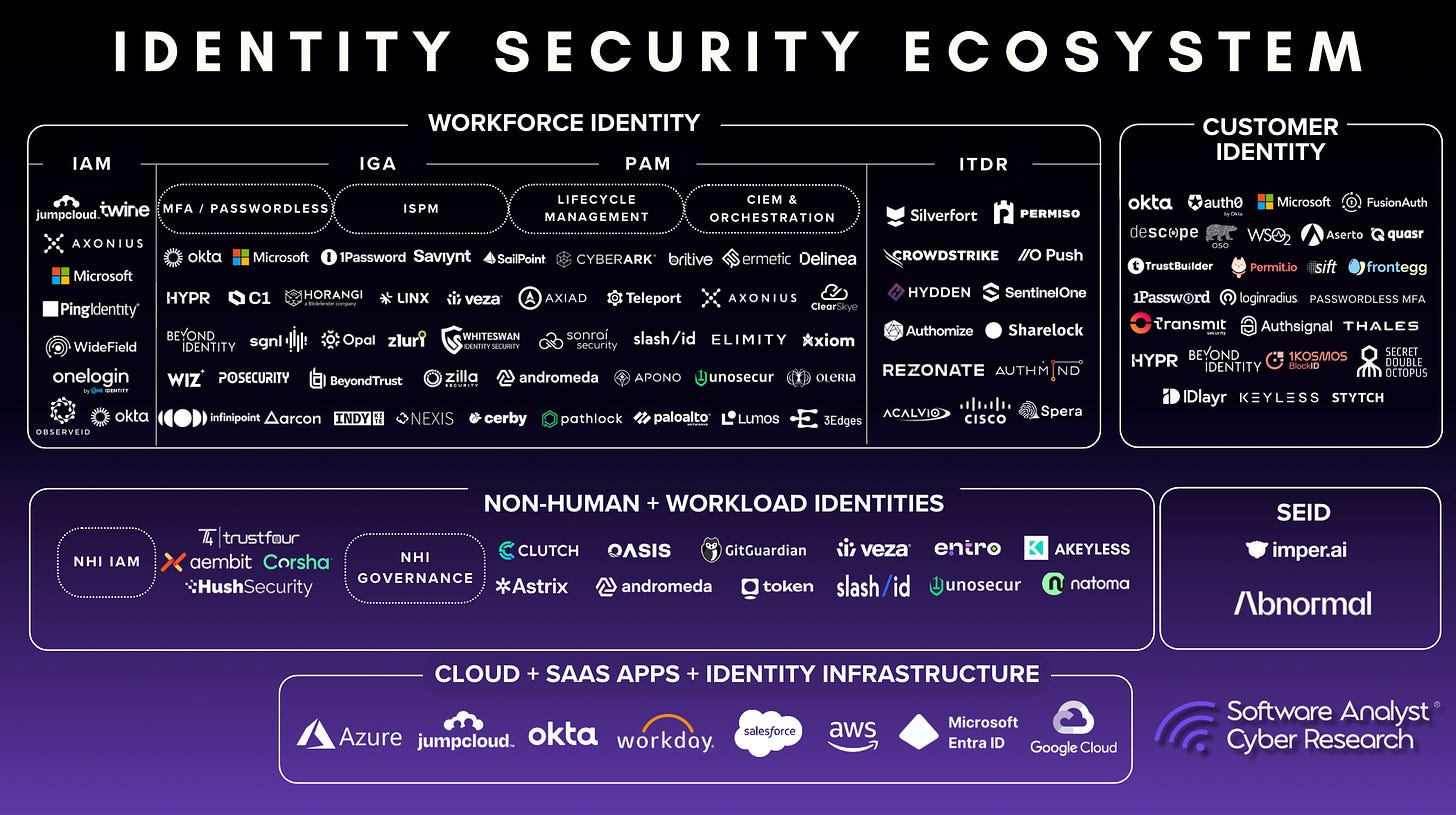

Framework as a Market Category

SEID should be understood as a category frame for controls that protect identity-changing workflows from social engineering. At first, this market will likely form through adjacent products, with a cleaner standalone category emerging as buyers demand controls that span recovery, support, onboarding, and high-risk approvals. Products count as SEID infrastructure when they improve at least one of the framework’s core requirements: point-of-change verification, governed human decisioning, cross-channel identity context, or durable decision evidence.

The strategic need is an integrated control pattern, because attackers exploit the handoffs between tools as much as they exploit any one tool. Buyers should evaluate SEID solutions by workflow coverage, evidence quality, policy control, operator usability, and audit completeness. The key question is whether the product reduces attacker leverage at the moment an identity-sensitive action is approved. SEID ownership will likely be cross-functional. IAM teams own several identity controls, SOC teams see account takeover and behavioral signals, IT service teams operate recovery workflows, and HR owns onboarding risk. A mature program needs a clear owner for identity-sensitive workflow decisions, even when the evidence and execution sit across multiple systems.

The business value of SEID comes from reducing the cost and frequency of compromised workflow decisions. Strong implementations should lower fraudulent reset risk, reduce unnecessary escalations, make help desk decisions more consistent, and give security teams cleaner evidence during incident response. These outcomes position the category as an operating model for safer identity service delivery.

Vendor Strategy

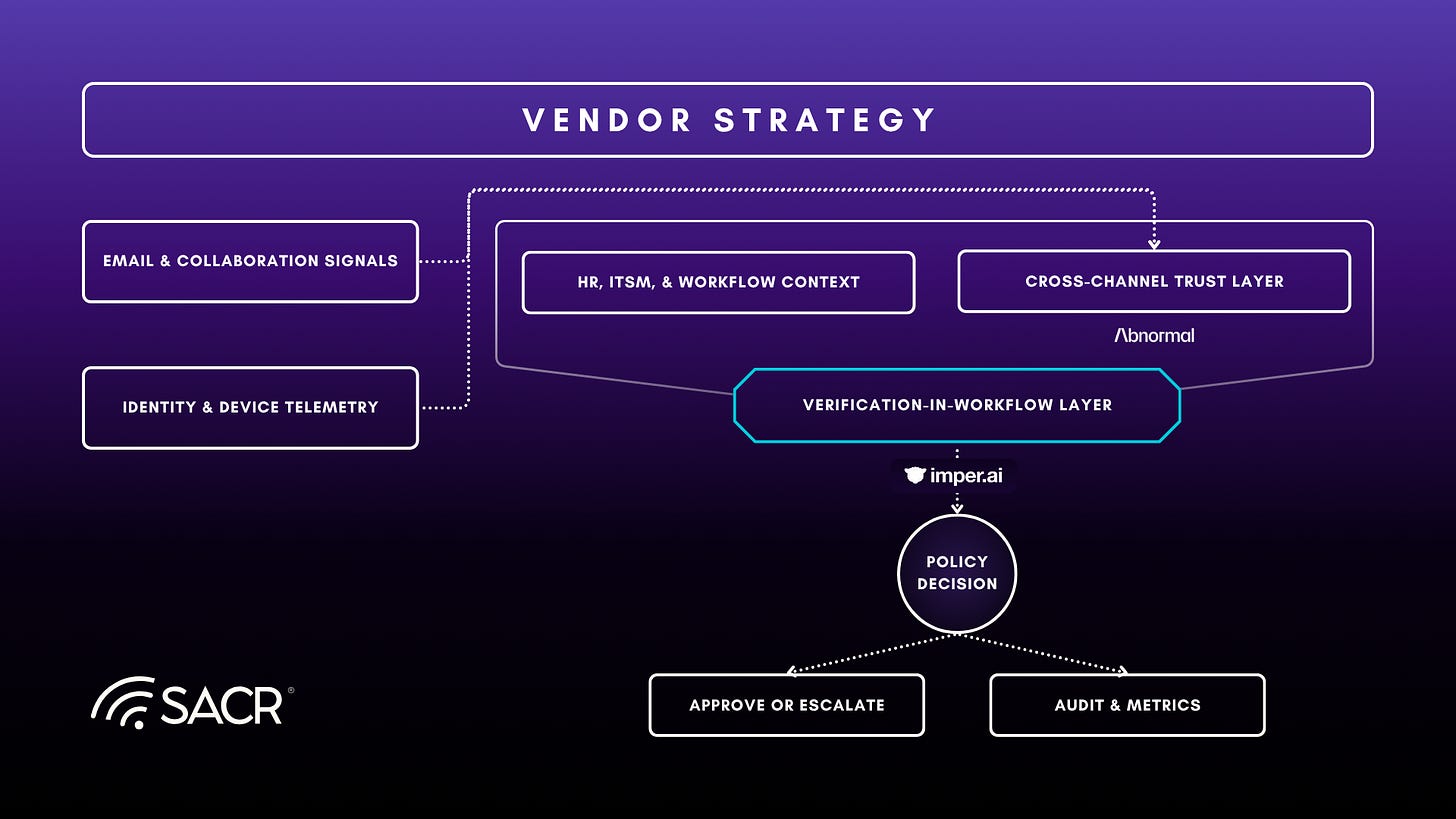

Constructing a complete SEID framework requires a multi-vendor approach, as no single solution can cover the full lifecycle of workforce identity workflows and their diverse attack surfaces. Successful security stacks must combine technologies that address both the Verification-in-Workflow layer and the Cross-Channel Identity Trust layer. For this reason, we examine a combined strategy with imper.ai and Abnormal AI, whose technologies map to how modern social engineering attacks often begin in communication channels and pivot to exploit core identity workflows.

imper.ai

Overview

imper.ai is relevant to SEID because it is focused on the workflow layer where identity assurance is often weakest. It is aimed at help desk recovery, hiring, onboarding, and other moments when trust is granted through process rather than through a standard authentication event. imper.ai presents itself as a workforce identity security platform operating between enterprise IAM controls and the human workflows that sit outside routine sign-in. That framing aligns closely with the SEID view that stronger MFA shifts attacker pressure toward recovery and exception paths.

This profile is especially relevant to Verification-in-Workflow because the product is centered on the decision point itself. It is designed for the moment when a password is reset, a candidate is advanced, or credentials are issued. That matters because the security consequence becomes operationally real at that point.

Product & Architecture

At the product level, imper.ai combines an impersonation detection engine with a contextual verification layer. The detection engine correlates network and location characteristics, endpoint and device attributes, and behavioral patterns into a real-time risk score. The contextual verification layer adds dynamic, work-based questions grounded in enterprise context rather than static knowledge checks, document uploads, or biometric enrollment. In practice, the model is trying to observe conditions an attacker has to manage while impersonating a real worker, then pair those signals with verification logic tied to the specific workflow.

That architecture maps cleanly to the SEID model because it operates inside high-consequence identity transactions. In the help desk recovery flow, a caller requesting a password reset or MFA change can be routed into a verification process that combines ticket context, device and network telemetry, and contextual questioning before the action is approved. The same logic extends into hiring and onboarding. Recruiters continue to work inside ATS or interview workflows while imper.ai adds risk scoring, contextual prompts, and a record across multiple stages. At onboarding, the platform can compare the credential-issuance session to an earlier hiring baseline where that baseline exists, or establish one at the point of first verification.

The outsourced help desk point is also analytically important. Third-party agents often have less organizational familiarity and less confidence about what normal behavior looks like for a given employee. A workflow-linked verification layer can reduce reliance on individual judgment by standardizing the decision around policy-backed evidence. That is why the product is relevant to recent attack patterns associated with contractor-mediated or help desk access paths, including public reporting around Caesars, MGM, and M&S.

The AI layer also appears to have been designed with adversarial misuse in mind. System prompts are separated from user input, a secondary model evaluates responses for prompt-injection patterns, output length is constrained, and suspicious sessions can be marked as failed without providing the requester direct feedback. These signals are used for risk assessment without collecting session content such as typed responses, documents, or communications, which helps address privacy and deployment concerns.

imper.ai’s public DPRK research adds a useful example of how the model is intended to work. In an April 13, 2026 post, the company reported that four candidates out of 600 pre-employment verifications showed device, network, and identity signals that aligned with published DPRK IT worker tradecraft. The value of that case study extends beyond the attribution claim. It shows that the platform is attempting to detect risk in the network, device, and workflow layer during a live verification event, which is a layer that resume review, background checks, and one-time identity proofing often do not observe directly.

Competition & Positioning

imper.ai overlaps with several adjacent categories, including identity proofing, help desk security, hiring fraud controls, step-up authentication, and deepfake or meeting security tools. Its practical distinction is that it appears to join infrastructure signals with enterprise context at the point where a high-impact identity decision is made. That gives it a different role from products that are strongest at sign-in, strongest during document or biometric checks, or strongest inside a single meeting or media interaction.

That position gives imper.ai a distinctive place in the SEID landscape. Identity providers and MFA vendors reduce straightforward credential abuse, but they are often less informative once a user enters a recovery or exception path. Identity proofing vendors are typically oriented to discrete verification events rather than continuing workflow assurance. Deepfake or meeting security tools can contribute evidence inside a video or audio interaction, but they usually do not govern the downstream workflow where access is restored, credentials are issued, or a candidate is advanced. imper.ai is interesting because it is trying to sit inside those operational systems and carry risk evaluation through the decision itself.

The strategic limit is also clear. imper.ai depends on surrounding systems such as identity providers, HR platforms, and ITSM tools for authoritative context and final execution. Its role is better understood as a control layer inside those workflows than as a full replacement for the systems around it.

Implications for SEID

imper.ai is a strong example of Verification-in-Workflow and Governed Human Interaction. The product is built around the premise that sensitive identity actions should be mediated by contextual verification and policy rather than by static knowledge questions or agent discretion alone. It also has a meaningful fit within Measurable Outcomes and Auditability through the investigation views, verification records, and workflow-linked results.

The practical importance of imper.ai in this report is that it treats social engineering as an identity workflow problem. If the platform executes well, it could become a meaningful control layer for organizations that have already strengthened MFA but still expose high-impact exceptions through recovery desks, recruiting teams, and onboarding functions. The main diligence questions therefore become operational: how much policy orchestration is handled directly in product, how consistent the system remains across varied enterprise roles and uneven data quality, and how broadly the current model extends beyond the flagship workflows.

Abnormal AI

Overview

Abnormal AI is relevant to SEID because it approaches identity abuse from the surrounding behavior layer rather than from the authentication control alone. The company began in email security, where it built behavioral baselines around employees, vendors, and communication patterns to detect phishing, business email compromise, vendor fraud, and account takeovers. It now presents the platform as a broader behavioral AI system for protecting people and data across cloud and SaaS environments. That matters for SEID because many workforce identity attacks begin as trust manipulation in email or collaboration tools before they surface as a reset request, privileged action, or workflow exception.

This profile is especially relevant to the Cross-Channel Identity Trust pillar. Abnormal is centered on whether the surrounding sequence of communications, account activity, and relationship signals still looks consistent with the real person behind the identity. In that sense, it occupies an important adjacent layer in the SEID model: the system that can make a later identity decision more informed and more defensible.

Product & Architecture

At the platform layer, Abnormal’s differentiator is behavioral modeling across identities and relationships rather than static rule matching alone. Through API-based integrations into Microsoft 365, Google Workspace, and other connected SaaS applications, the platform ingests large volumes of contextual signals and builds per-identity and per-relationship baselines. In practice, that means it is not only evaluating whether an email or login looks suspicious in isolation, but whether a sequence of events deviates from how a user, vendor, or service normally behaves over time.

This model is visible in the company’s current product set. Its cloud email security offering focuses on phishing, vendor email compromise, and other socially engineered attacks that bypass traditional email defenses. Its account takeover products extend that behavioral approach into Microsoft 365, Google Workspace, and SaaS applications, where Abnormal correlates signs such as unusual sign-ins, mailbox-rule changes, abnormal location or device context, and suspicious downstream activity. Abnormal’s integrations with CrowdStrike are especially relevant to this report because they feed context from Abnormal’s behavioral analysis across multiple sources into identity and response workflows, reinforcing the Verification-in-Workflow pillar of SEID.

Abnormal describes its core advantage as cross-signal correlation over time. The company argues that subtle events often do not cross a threshold on their own, but become high-confidence evidence when chained together across email, sign-ins, device context, vendor relationships, and subsequent SaaS behavior. That claim is analytically important for SEID because social engineering-driven identity abuse often appears benign when viewed as isolated steps. The problem becomes clearer only when communications context and post-authentication behavior are considered together.
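The general idea that individually weak signals can add up to strong evidence can be shown with a simple noisy-OR combination. This is a generic textbook technique used here for illustration. It is not a description of Abnormal's model, and it assumes the signals are independent, which real signals often are not. The scores and the 0.7 threshold are invented.

```python
from math import prod
from typing import Iterable


def combined_confidence(signal_scores: Iterable[float]) -> float:
    """Noisy-OR: chance that at least one independent signal is a true hit."""
    scores = list(signal_scores)
    if any(not 0.0 <= s <= 1.0 for s in scores):
        raise ValueError("scores must be between 0 and 1")
    # Multiply the chances that each signal is benign, then invert.
    return 1.0 - prod(1.0 - s for s in scores)


ALERT_THRESHOLD = 0.7  # assumed value for the example

# Hypothetical per-signal scores: odd sign-in, new mailbox rule, unusual vendor reply.
scores = [0.4, 0.5, 0.45]
individually_alerting = [s >= ALERT_THRESHOLD for s in scores]
chained = combined_confidence(scores)
```

With these illustrative inputs, no single score reaches the threshold, but the combined value is 1 - (0.6 × 0.5 × 0.55) = 0.835, which does.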

From an SEID perspective, the key architectural point is that Abnormal treats communications and cloud activity as an evidence layer about identity trust. A suspicious reset or service request should be interpreted differently if it is preceded by thread hijacking, a compromised vendor relationship, impossible travel, mailbox-rule manipulation, or other behavior that diverges from the employee’s historical pattern. Abnormal’s value is in assembling that context earlier and more coherently than tools that only inspect a single channel or a single event type.

Competition & Positioning

For the purposes of this report, Abnormal overlaps with several adjacent categories: email security platforms, ITDR vendors, SaaS security tools, and a broader set of behavioral analytics vendors that claim to detect social engineering and account misuse. Its practical differentiator is the attempt to unify those surfaces around one behavioral model of people, relationships, and cloud activity rather than treating them as separate detection silos.

That position gives Abnormal a distinctive place in the SEID landscape. Vendors centered on authentication, MFA, or identity provider telemetry are often strongest at login and session controls but weaker at interpreting the communications and relationship context that frequently precedes compromise. Email-focused tools can identify phishing or impersonation but often stop short of building a broader identity-risk narrative across SaaS and service workflows. Abnormal is interesting because it is moving across that boundary. If the platform can reliably join communication behavior, account takeover evidence, and cloud activity into a coherent case, it starts to resemble a contextual trust layer for workforce identity decisions.

The strategic limit is also clear. Abnormal does not, by itself, solve the full Verification-in-Workflow problem. It does not replace authoritative identity systems, approval policy, or workflow orchestration. Its role is better understood as upstream context and autonomous detection that can improve the quality of identity decisions made elsewhere. In a mature SEID architecture, that may be valuable precisely because the system supplying context should remain distinct from the system granting final authority.

Implications for SEID

Abnormal is most relevant to the third SEID pillar, Cross-Channel Identity Trust, and secondarily to Measurable Outcomes and Auditability because it can generate contextual cases, risk signals, and audit data that other systems can consume. It also has emerging relevance to Verification-in-Workflow if its identity roadmap produces a stable way to feed behavioral attestation into help desk, reset, or recovery flows.

The practical importance of Abnormal in this report is not that it provides a complete SEID stack. It demonstrates where part of the market is heading: toward identity decisions informed by behavioral context drawn from email, collaboration, ticketing, and SaaS activity rather than by authentication status alone. That shift matters because many socially engineered identity incidents are decided in the period between the first trust signal and the final privileged action.

Key open questions to validate include how broadly and reliably Abnormal can correlate behavior across non-email systems at scale, how explainable its behavioral decisions remain during high-consequence identity workflows, and how well its account takeover and ticketing context can be operationalized by downstream workflow engines.

Practitioner Takeaways

Practitioners should begin by inventorying identity transactions that sit outside normal sign-in. Password resets, MFA changes, onboarding approvals, and other exception paths deserve the same review rigor as primary authentication because they can alter trust and access just as materially. In many environments, the most useful first move is not another front-door control. It is a stronger decision gate at the point of change, where a reset, recovery, or approval is about to be executed.
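A decision gate at the point of change can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference design: the action names, the `context_risk` input, and both thresholds are hypothetical, and a real gate would draw on authoritative identity systems and approval policy.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"     # require additional verification first
    ESCALATE = "escalate"   # route to a human reviewer


# Hypothetical set of identity-changing actions covered by the gate.
SENSITIVE_ACTIONS = {
    "password_reset",
    "mfa_change",
    "onboarding_approval",
    "account_recovery",
}


@dataclass(frozen=True)
class ChangeRequest:
    action: str
    requester_verified: bool  # outcome of an out-of-band identity check
    context_risk: float       # assumed upstream risk score in [0, 1]


def decide(req, step_up_at=0.3, escalate_at=0.7):
    """Evaluate a pending identity change just before it is executed."""
    if req.action not in SENSITIVE_ACTIONS:
        return Decision.ALLOW  # out of scope for this gate
    if req.context_risk >= escalate_at:
        return Decision.ESCALATE
    if not req.requester_verified or req.context_risk >= step_up_at:
        return Decision.STEP_UP
    return Decision.ALLOW
```

The design choice worth noting is that the gate runs when the reset, recovery, or approval is about to execute, so it applies regardless of which channel the request arrived through.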

That shift also requires a different operating model for human-facing teams. Help desk agents, recruiters, and HR staff need bounded policy, clear escalation, and evidence-backed approvals so that high-risk decisions do not depend on improvisation. At the same time, organizations need context to travel across channels. Email, voice, collaboration, and service workflows should inform one another when a sensitive identity action is pending. If a team cannot explain who requested the action, what evidence was used, and why it was approved, the control is not yet mature enough. That is also why a layered vendor strategy matters. Workflow verification and cross-channel context may come from different tools, so architecture and data flow deserve as much attention as feature depth.
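The maturity test above (who requested the action, what evidence was used, and why it was approved) can be expressed as a simple completeness check on an approval record. The record shape and field names here are hypothetical and shown only as a sketch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ApprovalRecord:
    action: str
    requested_by: str
    approved_by: str
    evidence: List[str] = field(default_factory=list)  # e.g. verification artifacts
    rationale: str = ""


def explanation_gaps(record):
    """Return the audit questions this record cannot yet answer."""
    gaps = []
    if not record.requested_by.strip():
        gaps.append("who requested the action")
    if not record.evidence:
        gaps.append("what evidence was used")
    if not record.rationale.strip():
        gaps.append("why it was approved")
    return gaps
```

An empty result means the record can answer all three questions. Any returned gap marks an approval that depended on undocumented judgment.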

Conclusion

SEID matters as a category because it gives security leaders a way to describe a problem that many organizations are already experiencing but often categorize in fragments. The help desk sees account recovery abuse. Recruiting sees synthetic candidates. Email security sees impersonation. Identity teams see pressure on MFA and exception flows. SEID connects those issues at the level where they share a common cause: sensitive identity decisions are still being made in workflows built for service delivery rather than adversarial trust enforcement.

As authentication continues to improve, the center of gravity in workforce identity security will keep moving toward those workflows. That does not reduce the importance of IAM or MFA. It expands what a complete identity defense program has to cover. Organizations that extend verification, governance, shared context, and auditability into recovery, onboarding, and other exception paths will be better positioned to reduce attacker leverage.

The practical next move is to treat identity-changing workflows as a core, integrated capability of the future enterprise identity plane, then design controls accordingly. That is the core contribution of SEID and the reason the category is worth tracking.